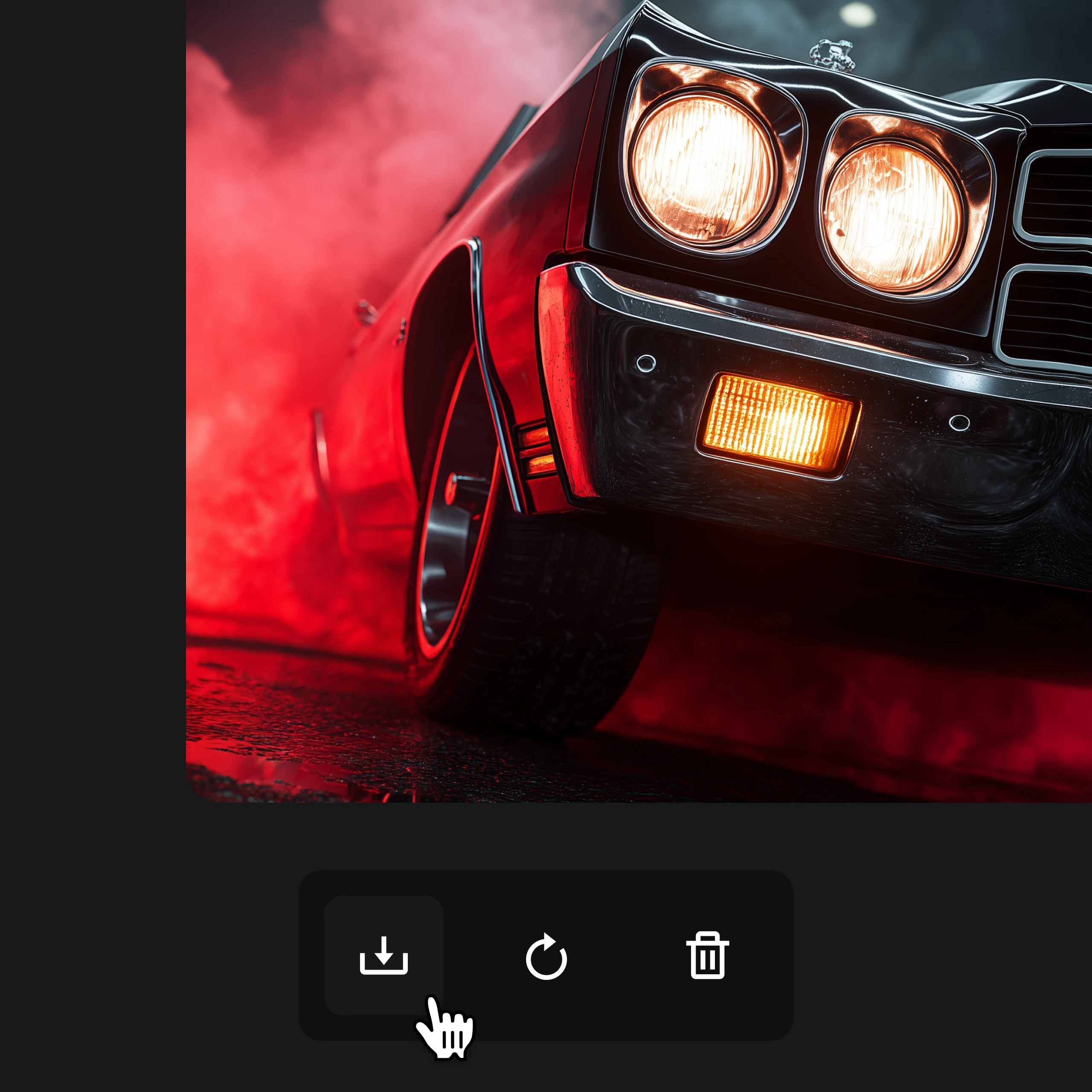

Unified Multimodal Inputs

Create with text, images, video, and audio in one unified workflow.

Seedance 2.0 is built for multimodal video creation from the ground up. Instead of relying on text prompts alone, it can combine text, image, video, and audio inputs in a single generation flow — giving creators more ways to shape motion, composition, scene direction, and overall output quality. Whether you are producing a product demo, a cinematic short, or a social media clip, the multimodal pipeline ensures your creative intent carries through from input to final render.

- Text prompts for scene direction

- Image references for visual guidance

- Video and audio inputs for richer control